Read the Parquet file named employee.parquet into a DataFrame with the following statement.

scala> val parqfile = sqlContext.read.parquet("employee.parquet")

Use the following command to store the DataFrame data in a table named employee. After this command, we can apply all types of SQL statements to it.

scala> parqfile.registerTempTable("employee")

Let us now pass some SQL queries on the table using the method SQLContext.sql(). Use the following command to select all records from the employee table. Here, we use the variable allrecords to capture all the record data.

scala> val allrecords = sqlContext.sql("SELECT * FROM employee")

To see the result data of the allrecords DataFrame, call the show() method on it.

scala> allrecords.show()
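The read, register, and query steps described above can be tried end to end in a single spark-shell session. What follows is a minimal sketch, assuming a Spark shell running in local mode where `sc` is predefined and the Spark 1.x-style API used in this text (`SQLContext`, `registerTempTable`) is available. The sample rows, the column names `id`, `name`, and `age`, and the temporary output path are hypothetical; they exist only so the sketch does not depend on an existing employee.parquet.

```scala
// Paste into spark-shell, where `sc` (the SparkContext) is predefined.
import java.nio.file.Files
import org.apache.spark.sql.SQLContext

val sqlContext = new SQLContext(sc)

// Hypothetical sample rows; these are NOT the tutorial's employee data.
val sample = sqlContext
  .createDataFrame(Seq((1, "alice", 30), (2, "bob", 41), (3, "carol", 27)))
  .toDF("id", "name", "age")

// Write the rows as a Parquet directory under a throwaway temp location.
val path = Files.createTempDirectory("parquet-demo").resolve("employee.parquet").toString
sample.write.mode("overwrite").parquet(path)

// Read the Parquet data back; the schema is recovered from the files themselves.
val parqfile = sqlContext.read.parquet(path)

// Register the DataFrame as a temporary table so SQL can refer to it by name.
parqfile.registerTempTable("employee")

// Select every record and display the result.
val allrecords = sqlContext.sql("SELECT * FROM employee")
allrecords.show()
```

On Spark 2.0 and later, `registerTempTable` is deprecated in favor of `createOrReplaceTempView`; the sketch keeps the older call only to match the commands in the text.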

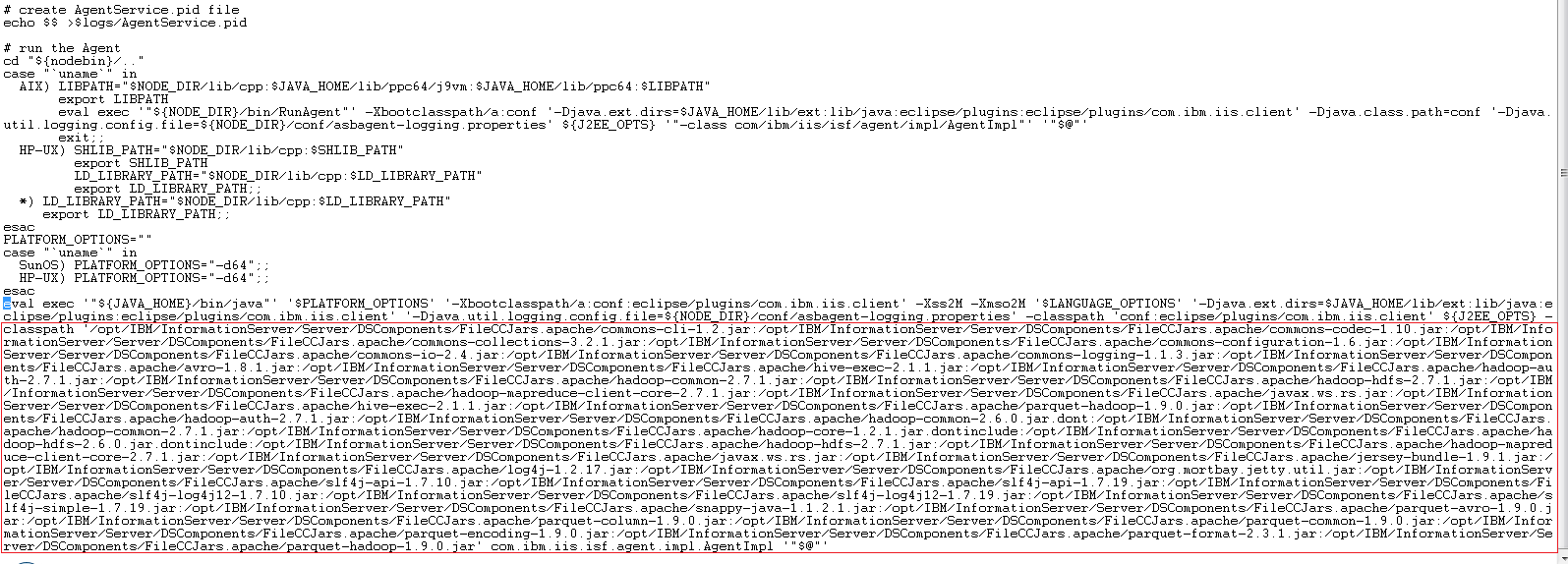

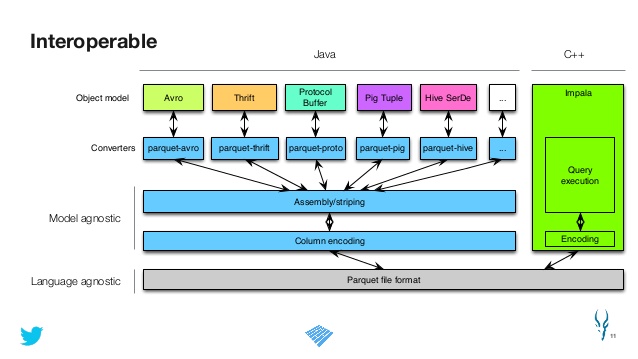

Parquet is a columnar format, supported by many data processing systems. The advantages of having a columnar storage are as follows:

* Columnar storage can fetch specific columns that you need to access.
* Columnar storage gives better-summarized data and follows type-specific encoding.

Spark SQL provides support for both reading and writing Parquet files that automatically capture the schema of the original data. Like JSON datasets, Parquet files follow the same procedure.

Let's take another look at the same example of employee record data, named employee.parquet and placed in the same directory where spark-shell is running. Do not bother about converting the input data of employee records into Parquet format by hand; Spark commands convert the RDD data into a Parquet file. Place the employee.json document, which we have used as the input file in our previous examples.

It is not possible to show you the Parquet file. It is a directory structure, which you can find in the current directory, and you can inspect its directory and file structure from the shell.

Compressing the entire file (a) does not work because reading Parquet files requires jumping around to random locations, which is not possible in compressed files, and (b) has essentially no effect because the Parquet files are already compressed internally, and compressing the file twice will not accomplish much.

Reading the file, registering it as a table, and applying some queries to it requires a running Spark shell and a SQLContext. Start the Spark shell, then generate the SQLContext using the following command. Here, sc means the SparkContext object.

scala> val sqlContext = new org.apache.spark.sql.SQLContext(sc)

With the SQLContext in place, a DataFrame is created by reading data from the Parquet file named employee.parquet.

When the time dimension is a DateType column, a format should not be supplied. When the format is UTF8 (String), either auto or an explicitly defined format is required.
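The two time-format rules stated above can be read as a small validation check. The sketch below is only an illustration in plain Scala and is not part of the extension: the names `TimeColumn`, `DateTypeColumn`, `Utf8Column`, and `checkTimestampFormat` are hypothetical, and how the extension itself enforces these rules is not shown here.

```scala
// Hypothetical model of the two kinds of time column mentioned in the text.
sealed trait TimeColumn
case object DateTypeColumn extends TimeColumn // time dimension stored as DateType
case object Utf8Column extends TimeColumn     // time dimension stored as UTF8 (String)

// Returns Right(()) when the (column, format) pair follows the stated rules,
// or Left(message) naming the rule that was broken.
def checkTimestampFormat(column: TimeColumn, format: Option[String]): Either[String, Unit] =
  (column, format) match {
    case (DateTypeColumn, None)    => Right(())
    case (DateTypeColumn, Some(f)) => Left(s"DateType column must not supply a format (got '$f')")
    case (Utf8Column, Some(_))     => Right(()) // "auto" or an explicitly defined format
    case (Utf8Column, None)        => Left("UTF8 column needs 'auto' or an explicit format")
  }

// Example calls covering one valid and one invalid combination per column kind.
println(checkTimestampFormat(DateTypeColumn, None))
println(checkTimestampFormat(DateTypeColumn, Some("auto")))
println(checkTimestampFormat(Utf8Column, Some("auto")))
println(checkTimestampFormat(Utf8Column, None))
```

Returning `Either` rather than throwing keeps the rule check easy to compose with other hypothetical validation steps.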
The parser is configured with the following fields:

* The parser type: choose parquet or parquet-avro to determine how Parquet files are parsed.
* The parseSpec: specifies the timestamp and dimensions of the data, and optionally, a flatten spec. Valid parseSpec formats are timeAndDims, parquet, avro (if used with avro conversion).
* A further option specifies whether a bytes Parquet column that is not logically marked as a string or enum type should be converted to strings anyway.

Both parse options support auto field discovery and flattening if provided with a flattenSpec with parquet or avro as the format. Logical types should operate correctly with JSON path expressions for all supported types. For parquet-avro, the Hadoop job property whose name ends in list-element-records is set to false (it normally defaults to true), in order to 'unwrap' primitive list elements into multi-value dimensions.

The parquet parser supports int96 Parquet values, while parquet-avro does not. There may also be some subtle differences in the behavior of JSON path expression evaluation of flattenSpec.

We suggest using parquet over parquet-avro to allow ingesting data beyond the schema constraints of Avro conversion. However, parquet-avro was the original basis for this extension, and as such it is a bit more mature.

Note: druid-parquet-extensions depends on the druid-avro-extensions module, so be sure to include both.

This extension provides two ways to parse Parquet files:

* parquet - using a simple conversion contained within this extension
* parquet-avro - conversion to avro records with the parquet-avro library and using the druid-avro-extensions module to parse the avro data

Selection of conversion method is controlled by parser type, and the correct hadoop input format must also be set.


Apache Parquet Extension

This Apache Druid (incubating) module extends Druid Hadoop based indexing to ingest data directly from offline Apache Parquet files.